Why Offline AI Voice Assistants Are the Future

Our voice is the most beautiful and natural communication method we use every day. This is part one of a blog series on why offline AI voice assistants are the future. I want to pitch why we need a safer approach for use at home or in the office, and how it can be a stepping stone to the future of voice commerce.

Among US voice assistant users, 88.4% will engage with the tech on smartphones in 2023, according to Insider Intelligence’s forecast. This number is expected to rise to 89.7% by the end of the forecast period in 2027. (source: https://www.insiderintelligence.com/insights/voice-assistants/)

The rise of voice assistants has revolutionized the way we interact with technology. From smart speakers to voice-enabled smartphones, these assistants have made our lives easier by providing a convenient and intuitive interface for performing various tasks. However, with the increasing concerns over data privacy and security, it’s time to rethink the current approach to voice assistants and embrace a safer and more secure solution. In this blog post, we’ll explore the benefits of offline voice assistants and why they are the future of voice technology.

Why Offline Voice Assistants are the Future:

- Data Privacy and Security:

With the growing number of voice assistants in the market, there’s a rising concern over data privacy and security. Most voice assistants rely on cloud-based processing, which means that our voice data is being transmitted and stored on remote servers. This poses a significant risk of data breaches and cyber attacks, putting our personal information at risk. Offline voice assistants, on the other hand, process voice data locally on the device, eliminating the need for cloud-based processing and reducing the risk of data breaches.

- Convenience and Accessibility:

Offline voice assistants can provide a more convenient and accessible experience for users. With the ability to perform tasks without an internet connection, users can access their voice assistant anytime, anywhere. This is particularly useful for those who live in areas with limited internet connectivity or for those who travel frequently.

- Enhanced User Experience:

Offline voice assistants can provide a more seamless and intuitive user experience. With the ability to process voice data locally, offline voice assistants can respond faster and more accurately, reducing the latency and improving the overall user experience.

- Cost-Effective:

Offline voice assistants can be more cost-effective than their cloud-based counterparts. With the ability to process voice data locally, there’s no need for expensive cloud-based processing or data storage, reducing the overall cost of ownership.

- Scalability:

Offline voice assistants can be scaled down to fit into smaller devices, making them more versatile and accessible. This can enable the integration of voice assistants into a wider range of devices, including smart home devices, wearables, and other IoT devices.

- Future of Voice Commerce:

Offline voice assistants can pave the way for the future of voice commerce. With the ability to process voice data locally, offline voice assistants can enable secure and private voice transactions, providing a more secure and convenient way to shop online.

How Do Big Tech Virtual Voice Assistants Work?

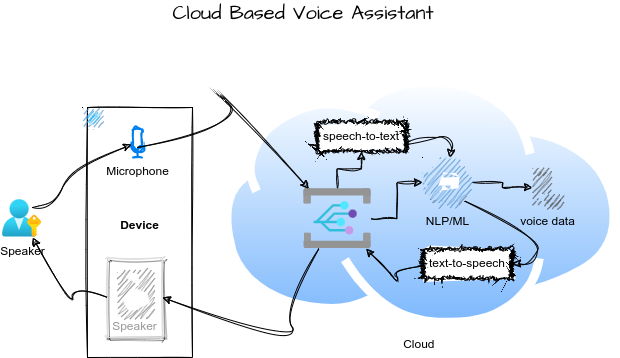

Let’s use Siri and Alexa as examples. Both work by combining several advanced technologies to understand and respond to the user’s voice commands. The main technologies they use are:

- Voice recognition: detecting human speech is the core functionality. Voice recognition converts our voice into text that the computer can understand.

- Natural Language Processing (NLP): converting speech to text as we speak is not enough. Virtual assistants also need to understand the meaning of our questions or prompts, which provides context and allows them to respond in a way that’s relevant to us.

- Machine Learning: the assistant captures user interactions and gets better at understanding our requests and providing accurate responses. This means that the more you use Siri or Alexa, the better it understands you and the better it becomes at assisting you. This also raises privacy concerns for consumers, because the assistant hears everything you say unless it is switched off, and the problem is magnified for children playing with voice assistants.

- Cloud-based servers: most of the voice processing takes place on cloud-based servers, which process our voice requests and provide responses in real time. They use massive computing power to deliver faster and more accurate responses, ensuring that we get the information we need, when we need it.

Putting it all together in a single diagram, at a high level it looks like this…

Among the top voice assistant companies in 2023, Google Assistant is the most popular with US consumers at 85.4 million users, followed by Apple’s Siri (81.1 million) and Amazon’s Alexa (73.7 million). (source: https://www.insiderintelligence.com/insights/voice-assistants/)

How can we shrink the entire voice solution to run locally for FREE?

The convergence of advancements in device technology and generative AI has created opportunities to streamline virtual assistant solutions onto a single consumer-grade device, either in offline mode or in a scalable distributed setup, without jeopardizing the security of voice data. Despite the privacy concerns, we still love to use voice assistants because they are designed to help us interact with our smart workspace or home quickly and easily.

The design thinking behind an advanced AI-based offline voice assistant

The simplest option is an all-in-one approach: a single click launches the advanced AI-based voice assistant locally. It looks something like this:

When it needs to serve multiple users, it can be configured to run in distributed mode, with hardware optimized for a specific service, such as a GPU for faster LLM inference or for loading a specific output voice.
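One way to picture the two deployment modes is as a small table that maps each service to the machine it runs on. The sketch below is only an illustration under assumptions, not the project's actual configuration: the service names (`stt`, `llm`, `tts`), the `ServiceEndpoint` class, the helper functions, and the ports and addresses are all hypothetical.

```python
import ipaddress
from dataclasses import dataclass

# Hypothetical service names: speech-to-text, language model, text-to-speech.
SERVICES = ("stt", "llm", "tts")


@dataclass(frozen=True)
class ServiceEndpoint:
    host: str
    port: int
    needs_gpu: bool = False

    @property
    def is_local(self) -> bool:
        return self.host in ("127.0.0.1", "localhost")


def all_in_one(base_port: int = 9000) -> dict[str, ServiceEndpoint]:
    """All-in-one mode: every service runs on the same machine."""
    return {
        name: ServiceEndpoint("127.0.0.1", base_port + offset)
        for offset, name in enumerate(SERVICES)
    }


def distributed(hosts: dict[str, str], port: int = 9000) -> dict[str, ServiceEndpoint]:
    """Distributed mode: one host per service, with the LLM flagged for a GPU."""
    missing = [name for name in SERVICES if name not in hosts]
    if missing:
        raise ValueError(f"no host configured for: {', '.join(missing)}")
    return {
        name: ServiceEndpoint(hosts[name], port, needs_gpu=(name == "llm"))
        for name in SERVICES
    }


def stays_on_private_network(layout: dict[str, ServiceEndpoint]) -> bool:
    """True if every endpoint is a loopback or private (LAN) IP address."""
    for endpoint in layout.values():
        host = "127.0.0.1" if endpoint.host == "localhost" else endpoint.host
        address = ipaddress.ip_address(host)
        if not (address.is_loopback or address.is_private):
            return False
    return True


single = all_in_one()
multi = distributed({"stt": "192.168.1.10", "llm": "192.168.1.20", "tts": "192.168.1.30"})
```

A check like `stays_on_private_network` is a cheap guard for the distributed case: it confirms that spreading the services across machines has not quietly pointed one of them at a public address.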

As you can see, there is no data leak. On top of that, we will leverage retrieval-augmented generation (RAG) to make our private data and knowledge available to query, and add AI agents to do work for us, something that is not possible with the big tech cloud-based virtual assistants. It’s FREE, since we will use open source large language models as the brain and knowledge worker that handles user interaction, and state-of-the-art neural networks to handle speech-to-text and text-to-speech conversion. Moreover, in this solution the speaker can speak one language and the LLM can respond in another, for mixed-language chatting.
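To make the flow concrete, here is a minimal sketch of the local loop described above: speech to text, retrieval over private notes, an LLM call, then text back to speech. It is an illustration under assumptions rather than the code from the repository. The `OfflineAssistant` class, the `retrieve` function and the `fake_*` stand-ins are hypothetical, the retriever is a toy word-overlap ranking where a real RAG setup would normally use embeddings, and in practice the three callables would wrap locally running models.

```python
from dataclasses import dataclass, field
from typing import Callable


def retrieve(question: str, notes: list[str], top_k: int = 1) -> list[str]:
    """Toy retriever: rank notes by how many words they share with the question."""
    question_words = set(question.lower().split())
    scored = [(len(question_words & set(note.lower().split())), note) for note in notes]
    scored = [pair for pair in scored if pair[0] > 0]  # drop notes with no overlap
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [note for _, note in scored[:top_k]]


@dataclass
class OfflineAssistant:
    speech_to_text: Callable[[bytes], str]
    generate: Callable[[str], str]
    text_to_speech: Callable[[str], bytes]
    notes: list[str] = field(default_factory=list)  # private knowledge, kept on-device

    def handle(self, audio: bytes) -> bytes:
        question = self.speech_to_text(audio)
        context = retrieve(question, self.notes)
        # Retrieved notes are placed in the prompt ahead of the question.
        prompt = "\n".join(["Context:", *context, "Question:", question])
        answer = self.generate(prompt)
        return self.text_to_speech(answer)


# Stand-ins so the sketch runs without any model installed.
def fake_stt(audio: bytes) -> str:
    return audio.decode("utf-8")  # pretend the audio is already a transcript


def fake_llm(prompt: str) -> str:
    lines = prompt.splitlines()
    context = lines[1:lines.index("Question:")]
    return context[0] if context else "I don't know."


def fake_tts(text: str) -> bytes:
    return text.encode("utf-8")  # pretend the bytes are synthesized audio


assistant = OfflineAssistant(
    fake_stt,
    fake_llm,
    fake_tts,
    notes=["The wifi password is on the fridge", "Trash pickup is on Tuesday"],
)
reply = assistant.handle(b"when is trash pickup")
```

Because each stage is just a callable, any of the three can be swapped for a different local model without touching the rest of the loop, and nothing in `handle` needs a network connection.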

In the next post, I will share the code, how I am building the solution, and the progress made so far. I am building it for my own personal use to take care of routine tasks, because offline voice assistants offer a safer, more convenient, and more cost-effective solution for voice technology: they process voice data locally and offer family-friendly, enterprise-grade privacy.

Conclusion

Offline voice assistants offer a safer, more convenient, and more cost-effective solution for voice technology. With the ability to process voice data locally, offline voice assistants can provide a more seamless and intuitive user experience, while also addressing the concerns over data privacy and security. As the technology continues to advance, offline voice assistants are poised to revolutionize the way we interact with technology and pave the way for the future of voice commerce, where voice is used to manage transactions.

github code: https://github.com/PavAI-Research/pavai-c3po

I hope you like the idea of running a powerful AI-based voice assistant, built with state-of-the-art voice neural networks combined with a generative AI LLM/GPT and AI agents, on your own laptop for FREE.

Cheers,

Have a great day!